Oracle’s recent market performance is not a reflection of traditional software-as-a-service (SaaS) growth metrics, but rather a fundamental repricing of its physical infrastructure footprint. The rally following its recent earnings report stems from a shift in investor perception: Oracle is no longer viewed as a legacy database provider attempting to catch up to the "Hyperscale Three" (AWS, Azure, GCP), but as a specialized high-performance computing (HPC) utility. The core of this thesis rests on the decoupling of cloud demand from general enterprise spend, specifically driven by the non-linear scaling requirements of Large Language Models (LLMs).

The Three Pillars of the Oracle Infrastructure Pivot

To understand the sudden investor confidence, one must categorize Oracle’s strategy into three distinct operational pillars that differentiate its Cloud Infrastructure (OCI) from its larger competitors.

1. The RDMA Networking Advantage

Standard cloud architectures often struggle with the latency overhead inherent in virtualized environments. Oracle’s early bet on Remote Direct Memory Access (RDMA) over Converged Ethernet (RoCE) allows GPUs to communicate directly with one another without involving the CPU or the heavy overhead of the operating system stack.

In the context of training runs for models like those developed by xAI or NVIDIA, this reduces the "tail latency" that often bottlenecks massive distributed training clusters. By offering "bare metal" instances—where customers rent the physical hardware without a hypervisor layer—Oracle provides a performance profile that more closely resembles an on-premise supercomputer than a traditional public cloud.
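To see why tail latency matters more as clusters grow, consider a deliberately simplified model of a synchronous training step, which completes only when the slowest worker has finished. The sketch below is illustrative only: the function names, the independence assumption, and all numeric parameters (stall probability, base step time, stall penalty) are hypothetical and are not measurements of OCI or any other cloud.

```python
def prob_any_straggler(num_workers: int, stall_prob: float) -> float:
    """Probability that at least one worker stalls in a given step.

    Assumes each worker stalls independently with probability stall_prob.
    """
    return 1.0 - (1.0 - stall_prob) ** num_workers


def expected_step_ms(num_workers: int, base_ms: float,
                     stall_penalty_ms: float, stall_prob: float) -> float:
    """Expected duration of one synchronous step.

    Simplified model: if any worker stalls, the whole step is delayed
    by stall_penalty_ms, because every worker waits for the slowest one.
    """
    return base_ms + stall_penalty_ms * prob_any_straggler(num_workers, stall_prob)


if __name__ == "__main__":
    # Hypothetical per-worker stall probability of 0.1% per step.
    for n in (8, 1_000, 10_000):
        print(n, round(prob_any_straggler(n, 0.001), 3))
```

Under these assumptions, a stall that is rare for any single worker becomes close to certain on every step once the cluster reaches tens of thousands of workers, which is why reducing per-link latency variance pays off disproportionately at scale.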

2. Strategic Interoperability and the "Cloud Without Walls"

Oracle has reversed its historical stance on proprietary ecosystems by aggressively pursuing multi-cloud agreements with Microsoft Azure and Google Cloud. This is a tactical recognition of the "Gravity of Data." By placing Oracle Database hardware physically inside or adjacent to Azure and GCP data centers, they eliminate egress fees and latency issues that previously forced customers to migrate away from Oracle. This preserves their high-margin database revenue while capturing the "exhaust" from other clouds’ AI workloads.

3. Modular Data Center Deployment

While AWS and Google focus on massive, multi-hundred-megawatt availability zones, Oracle has optimized for modularity. Their ability to deploy "Sovereign Clouds" and smaller-footprint data centers allows them to enter Tier 2 markets or meet strict national data residency requirements faster than competitors who require larger land parcels and more complex power grids.

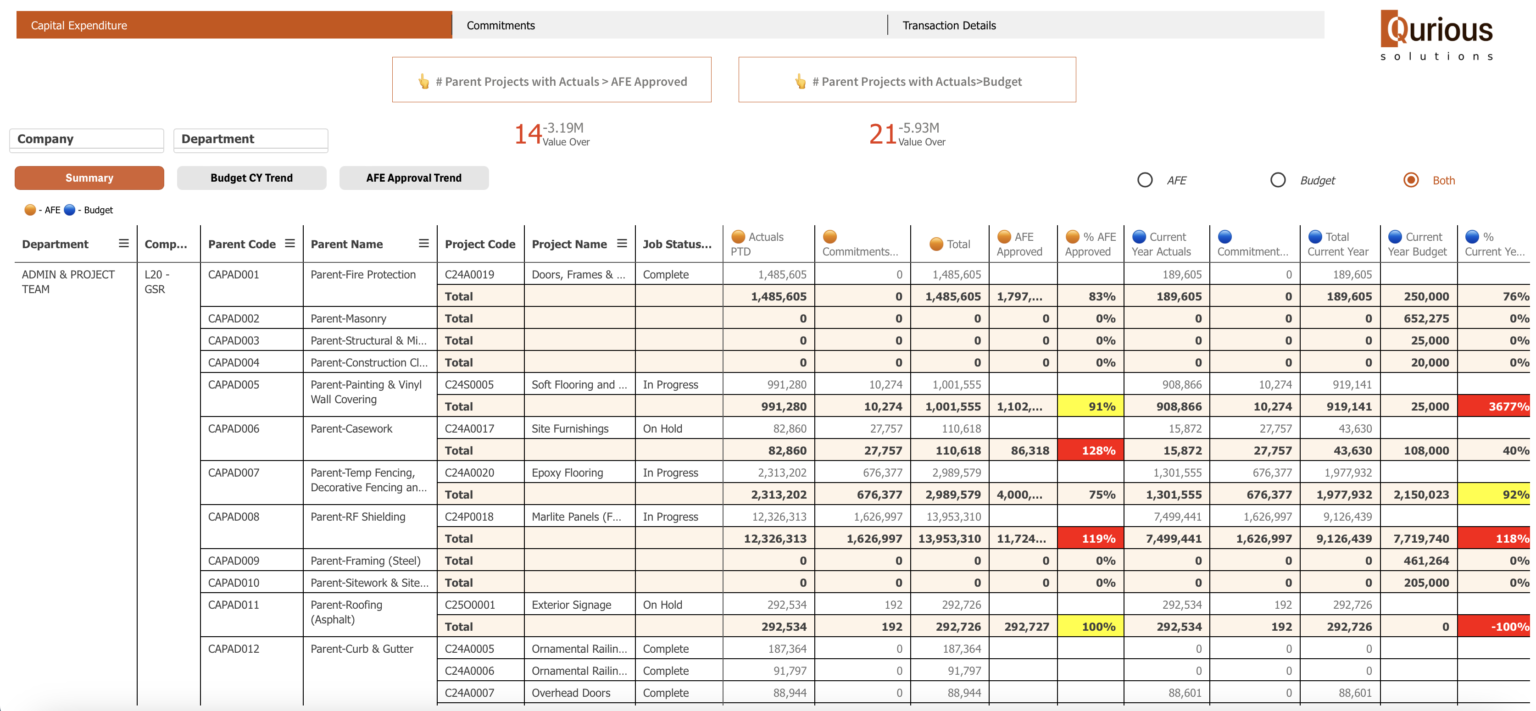

The Cost Function of AI Scaling

The primary risk factor cited by skeptics is the massive increase in Capital Expenditure (CapEx). However, analyzing the cost function of AI infrastructure reveals why this spend is viewed by the market as a "productive debt."

The total cost of ownership ($TCO$) for an AI data center is defined by the relationship between hardware procurement, power density, and utilization rates:

$$TCO = \sum (C_{cap} + C_{ops} + C_{energy}) \times \frac{1}{U}$$

Where:

- $C_{cap}$: Capital cost of H100/B200 clusters and high-speed networking.

- $C_{ops}$: Operational costs including specialized cooling systems.

- $C_{energy}$: The escalating cost of per-rack power.

- $U$: The utilization rate of the cluster.

Oracle’s advantage in this equation is $U$. Because demand for GPU compute currently exceeds supply, Oracle’s "built-to-suit" approach for large-scale customers like NVIDIA ensures that capacity is pre-sold before the concrete is poured. This removes the "speculative build" risk that typically plagues infrastructure cycles.
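The role of $U$ in the formula above can be made concrete with a small calculation. This is a minimal sketch that reads the formula as "cost per unit of capacity actually used"; the function name and the cost figures are hypothetical placeholders in arbitrary units, not Oracle data.

```python
def effective_cost(c_cap: float, c_ops: float, c_energy: float,
                   utilization: float) -> float:
    """Cost per unit of utilized capacity: (C_cap + C_ops + C_energy) / U."""
    if not 0.0 < utilization <= 1.0:
        raise ValueError("utilization must be in the interval (0, 1]")
    return (c_cap + c_ops + c_energy) / utilization


if __name__ == "__main__":
    # Hypothetical cost components in arbitrary units.
    costs = dict(c_cap=60.0, c_ops=25.0, c_energy=15.0)
    for u in (1.0, 0.8, 0.5):
        print(f"U={u:.1f} -> effective cost {effective_cost(**costs, utilization=u):.1f}")
```

With identical hardware, operating, and energy costs, halving utilization doubles the effective cost of each unit of compute sold, which is why pre-sold capacity changes the economics of the build.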

Deconstructing the Backlog: RPO vs. Revenue

The market’s enthusiasm is specifically tied to the growth in Remaining Performance Obligations (RPO). While current revenue growth remains steady, RPO acts as a leading indicator of the "revenue ramp."

The delta between $RPO$ and current recognized revenue represents a backlog of contracted demand that is currently constrained only by the speed of physical build-out. The bottleneck is no longer customer acquisition; it is the supply chain for power transformers, liquid cooling systems, and specialized chips. Oracle’s management has signaled that the backlog is increasingly composed of multi-year, multi-billion-dollar commitments, which provides a high-visibility floor for future earnings.
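One way to picture a capacity-constrained backlog is as a queue that drains only as fast as new data center capacity comes online. The sketch below assumes a hypothetical backlog and a hypothetical yearly delivery capacity, both in arbitrary units; it is not a model of Oracle's actual revenue recognition.

```python
def recognition_schedule(rpo: float, capacity_by_year: list[float]) -> list[float]:
    """Revenue recognized each year when delivery is capped by build-out capacity.

    Each year recognizes the smaller of the remaining backlog and the
    capacity available to deliver against it.
    """
    remaining = rpo
    schedule = []
    for capacity in capacity_by_year:
        recognized = min(remaining, capacity)
        schedule.append(recognized)
        remaining -= recognized
    return schedule


if __name__ == "__main__":
    # Hypothetical: 100 units of backlog, capacity ramping as sites come online.
    print(recognition_schedule(100, [20, 30, 40, 50]))
```

In this toy model, signing more contracts does not bring revenue forward; only a faster capacity ramp does.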

Structural Constraints and Execution Risks

The transition from a software-margin business (80%+) to an infrastructure-heavy business (30-40% GAAP margins for hardware-heavy cloud) introduces structural challenges that the current rally may be discounting.
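The effect of this mix shift on overall margin is simple weighted-average arithmetic. The sketch below uses the margin levels quoted above (80% for software, 40% for infrastructure) together with hypothetical revenue splits; the function name and the splits are assumptions for illustration.

```python
def blended_margin(software_rev: float, software_margin: float,
                   infra_rev: float, infra_margin: float) -> float:
    """Revenue-weighted average margin across the two business lines."""
    total_rev = software_rev + infra_rev
    if total_rev <= 0:
        raise ValueError("total revenue must be positive")
    profit = software_rev * software_margin + infra_rev * infra_margin
    return profit / total_rev


if __name__ == "__main__":
    # Hypothetical revenue mixes (software share, infrastructure share).
    for sw, infra in ((90, 10), (70, 30), (50, 50)):
        print(f"{sw}/{infra} mix -> {blended_margin(sw, 0.80, infra, 0.40):.0%}")
```

Even with each segment's margin held constant, the blended figure falls as infrastructure takes a larger share of revenue.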

The Power Density Bottleneck

Modern AI clusters require power densities of 60kW to 100kW per rack, compared to 10kW to 15kW for traditional enterprise workloads. This shift requires a total redesign of cooling systems, moving from air cooling to direct-to-chip liquid cooling. Oracle’s ability to retrofit existing data centers is limited; its growth depends almost entirely on greenfield builds that can support this thermal load.
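The practical consequence of these densities is easy to work out: a fixed power budget supports far fewer AI racks than enterprise racks. The sketch below uses the per-rack figures quoted above and a hypothetical 1 MW facility; the `pue` parameter (power usage effectiveness, the ratio of total facility power to IT power) and its values are assumptions for illustration.

```python
import math


def racks_supported(facility_kw: float, kw_per_rack: float, pue: float = 1.0) -> int:
    """Whole racks that fit within a facility power budget.

    pue >= 1.0 is the ratio of total facility power to IT power;
    the default of 1.0 ignores cooling and distribution overhead.
    """
    if pue < 1.0 or kw_per_rack <= 0:
        raise ValueError("pue must be >= 1.0 and kw_per_rack must be positive")
    it_power_kw = facility_kw / pue
    return math.floor(it_power_kw / kw_per_rack)


if __name__ == "__main__":
    facility_kw = 1_000  # hypothetical 1 MW site
    print("enterprise racks at 10 kW:", racks_supported(facility_kw, 10))
    print("AI racks at 100 kW:", racks_supported(facility_kw, 100))
```

The same megawatt that powers a hundred conventional racks powers only about ten AI racks, so power delivery and heat removal, rather than floor space, become the binding limits on a site.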

Concentration Risk

A significant portion of Oracle’s cloud growth is driven by a handful of massive AI startups and partnerships. If the "AI bubble" corrects—specifically if LLM providers fail to find sustainable monetization pathways—Oracle faces the risk of stranded assets. High CapEx investments are "sunk costs" that cannot be easily repurposed for lower-margin commodity web hosting if the AI demand curve flattens.

The Depreciation Drag

As Oracle accelerates its CapEx, the resulting depreciation and amortization (D&A) expenses will eventually hit the income statement. In the short term, this is often masked by EBITDA growth, since EBITDA excludes D&A, but long-term profitability requires that these chips keep generating revenue for at least as long as their depreciation schedule. Given the rapid iteration cycle of AI hardware (e.g., the move from NVIDIA’s Hopper to Blackwell architecture), there is a significant risk that hardware becomes obsolete before it is fully depreciated.
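The obsolescence risk can be expressed as the book value still on the balance sheet when hardware stops earning its keep. The sketch below assumes straight-line depreciation with no salvage value; the purchase cost, schedule length, and obsolescence age are hypothetical numbers, not Oracle's accounting policy.

```python
def book_value(cost: float, schedule_years: float, age_years: float) -> float:
    """Remaining book value under straight-line depreciation, no salvage value."""
    if schedule_years <= 0:
        raise ValueError("schedule_years must be positive")
    remaining_fraction = max(0.0, 1.0 - age_years / schedule_years)
    return cost * remaining_fraction


if __name__ == "__main__":
    # Hypothetical: 600 units of GPU spend on a 6-year schedule,
    # economically obsolete after 3 years.
    stranded = book_value(cost=600.0, schedule_years=6.0, age_years=3.0)
    print("undepreciated value at obsolescence:", stranded)
```

In this example, half of the original spend would still be undepreciated at the point the hardware is superseded, and that remainder would have to be covered by lower-priced workloads or written down.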

The Displacement of Legacy Database Revenue

A critical component of the Oracle narrative is the stabilization of its legacy business. For a decade, the "Oracle is dying" thesis was predicated on the migration of databases to open-source alternatives like PostgreSQL or cloud-native options like Amazon Aurora.

However, the complexity of enterprise data—governance, security, and integration—has proven stickier than anticipated. Oracle has leveraged this by integrating AI "vectors" directly into its flagship database. This allows enterprises to run Retrieval-Augmented Generation (RAG) on their existing data without the risk of moving it to a third-party vector database. This "data proximity" strategy is the primary defense against churn.

Strategic Allocation of Capital

Oracle is currently executing a "Liquidity Capture" strategy. By utilizing its massive cash flow from legacy software maintenance, it is funding the transition to a hardware-centric cloud provider. This is effectively a leveraged bet on the permanence of the AI compute cycle.

The investment logic follows a specific sequence:

- Secure Power and Land: Locking in long-term power contracts is the new "land grab."

- Vertical Integration: Designing custom silicon (Ampere-based CPUs) to reduce reliance on third-party chip margins.

- Automated Operations: Using "Autonomous Database" technology to reduce the headcount required to manage massive scale, protecting margins against labor inflation.

The Competitive Equilibrium

Oracle does not need to defeat AWS to be successful. The market for AI compute is currently non-zero-sum. As long as the demand for model training and inference grows at its current rate, Oracle can thrive as the "HPC Specialist" while AWS and Azure handle the broader "General Purpose" enterprise workloads.

The real test will occur when the market shifts from training (where Oracle’s RDMA networking is a massive advantage) to inference (where geographical distribution and edge presence matter more).

The strategic play for Oracle is to maximize the speed of its data center deployments while the "cost of compute" remains high. Once compute becomes commoditized, a process that typically takes 5 to 7 years in any technology cycle, Oracle must have already converted its infrastructure customers into long-term platform-as-a-service (PaaS) users. The current rally is a recognition of Oracle's lead in the first half of this race; the second half will be determined by whether it can maintain its premium pricing once hardware supply catches up with demand.