Sarah sits in a cramped office in suburban Ohio, her eyes reflecting the pale blue glow of a spreadsheet that seems to stretch into infinity. She is an inventory manager for a mid-sized retail chain. Her job, stripped of its corporate varnish, is to guess the future. If she orders too many parkas and the winter is mild, the company bleeds cash. If she orders too few and a polar vortex hits, she loses customers to the giant down the street.

For fifteen years, Sarah relied on "gut feel" and a patchwork of historical data. Today, she clicked a button labeled Optimized Forecast. Within three seconds, a system she doesn't fully understand told her to cut her wool sock order by 22% and double her stock of moisture-wicking base layers.

She felt a brief flicker of resentment. Then she felt something else: relief.

This is the quiet, everyday reality of what the industry calls Predictive Analytics. While the headlines scream about robots taking over the world or sentient machines writing poetry, the real revolution is happening in the mundane. It is happening in the sock aisle. It is happening in the way a bank decides if you deserve a mortgage. It is happening in the hospital wing where a nurse receives an alert about a patient’s vitals before the patient even feels a twinge of pain.

Data is no longer a rearview mirror. It has become a lighthouse.

The Ghost in the Machine

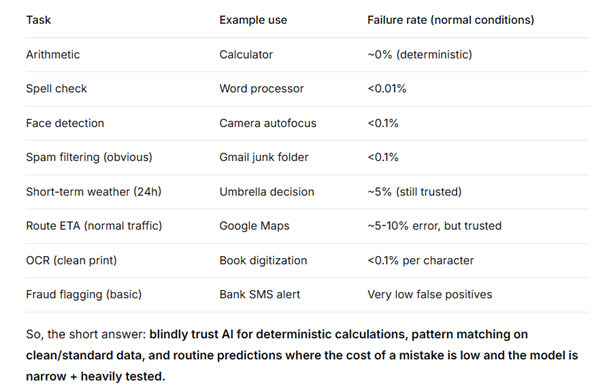

To understand how we reached this point, we have to look past the buzzwords. At its core, this technology is a sophisticated pattern-matching engine. Think of it like a seasoned birdwatcher who can spot a hawk from a mile away, not because they have supernatural vision, but because they have seen ten thousand hawks and know the specific, jagged rhythm of their wings.

When a company "analyzes data," it is essentially feeding millions of these wingbeats into an algorithm. The machine doesn't "know" what a hawk is. It just knows that when Pattern A and Pattern B appear together, Event C has followed 89% of the time.
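Stripped of the mystique, that kind of percentage is often little more than careful counting. The sketch below is a minimal illustration, not how any particular vendor's system works: the function name `conditional_rate`, the field names, and the sightings themselves are all invented for this example. It counts how often an outcome followed when two patterns appeared together.

```python
def conditional_rate(records, conditions, outcome):
    """Share of records matching every condition in which the outcome occurred.

    Returns None when no record matches, since a rate over zero
    observations is undefined.
    """
    matching = [
        r for r in records
        if all(r.get(key) == value for key, value in conditions.items())
    ]
    if not matching:
        return None
    hits = sum(1 for r in matching if r.get(outcome))
    return hits / len(matching)


# Hypothetical observations, invented purely for illustration.
sightings = (
    [{"jagged_wingbeat": True, "soaring": True, "hawk": True}] * 89
    + [{"jagged_wingbeat": True, "soaring": True, "hawk": False}] * 11
    + [{"jagged_wingbeat": False, "soaring": True, "hawk": False}] * 50
)

rate = conditional_rate(
    sightings,
    {"jagged_wingbeat": True, "soaring": True},  # Pattern A and Pattern B
    "hawk",                                      # Event C
)
print(f"{rate:.0%}")  # 89%
```

Real systems use far richer models than a tally, but the output means the same thing: a frequency observed in the past, offered as a guess about the future.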

Consider a hypothetical traveler named Elias. Elias is booking a flight to London. To the airline’s system, Elias isn't just a name; he is a constellation of data points. He prefers window seats. He usually books twelve days in advance. He almost always buys a coffee at the terminal. By analyzing Elias, along with a million people who look and act much like him, the airline can estimate how much he is likely to pay for an upgrade the moment he opens the app.

It feels like magic. Or, depending on your perspective, it feels like an intrusion.

The friction between efficiency and privacy is the defining tension of our era. We love that our streaming service knows exactly which obscure 1970s Italian horror movie we want to watch on a rainy Tuesday. We are significantly less comfortable when a credit card company predicts a divorce based on a change in spending patterns before the couple has even seen a counselor.

The Weight of a Percent

We often treat these systems as objective oracles. "The data says..." is a phrase used to end arguments in boardrooms across the globe. But data is not a neutral force of nature like gravity. Data is a collection of human choices, biases, and historical accidents.

If you train a predictive model on thirty years of hiring data from a company that primarily hired men, the model will "learn" that being a man is a prerequisite for success. It won't tell you it's being sexist. It will simply tell you that, mathematically, the "best" candidates happen to have deep voices and an affinity for golf.
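The mechanism is easy to reproduce in miniature. The following is a deliberately naive sketch under loud assumptions: the hiring records are fabricated for illustration, and the "model" is nothing more than a historical hire rate per group, a stand-in for whatever a real system would fit. The function name `hire_rate_by_group` is likewise hypothetical.

```python
def hire_rate_by_group(history, feature):
    """Historical hire rate for each value of `feature`.

    A toy stand-in for a trained model: it scores a group by how
    often people in that group were hired in the past.
    """
    totals, hired = {}, {}
    for row in history:
        group = row[feature]
        totals[group] = totals.get(group, 0) + 1
        hired[group] = hired.get(group, 0) + (1 if row["hired"] else 0)
    return {group: hired[group] / totals[group] for group in totals}


# Fabricated records standing in for decades of skewed hiring decisions.
history = (
    [{"gender": "man", "hired": True}] * 60
    + [{"gender": "man", "hired": False}] * 40
    + [{"gender": "woman", "hired": True}] * 10
    + [{"gender": "woman", "hired": False}] * 40
)

scores = hire_rate_by_group(history, "gender")
print(scores)  # {'man': 0.6, 'woman': 0.2}
```

Nothing in the code mentions merit. The gap in the scores is simply the gap in the past decisions, handed back with a decimal point.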

This is where the human element becomes vital. We cannot outsource our ethics to an equation. Sarah, our inventory manager in Ohio, knows that a local high school football team just made the state championships. She knows that every fan in town is going to want team-colored scarves next week. The algorithm, looking at five years of regional weather patterns, sees no reason for a spike in scarf sales.

Sarah overrides the system. She trusts the math, but she honors the context.

The most successful organizations are not the ones with the fastest processors or the largest datasets. They are the ones that understand the "Why" behind the "What." They recognize that a 70% probability is still a 30% chance of being dead wrong.

The Cost of Certainty

There is a psychological price to pay for living in a world that is increasingly predicted. We are losing the art of the pivot. When the path is laid out for us—from the GPS route that saves us four minutes to the "Recommended for You" list that dictates our taste—the muscle of serendipity begins to atrophy.

Think about the last time you wandered into a bookstore and found a life-changing novel by total accident. Or the last time you took a wrong turn and discovered a hidden cafe. These moments are "inefficient." Predictive systems are designed to eliminate them. They want to smooth out the bumps, fill in the potholes, and ensure that your experience is as frictionless as possible.

But life is found in the friction.

In the medical field, the stakes of this friction are literal. Predictive algorithms can flag the early markers of sepsis, in some cases hours before clinical symptoms appear. In this context, "frictionless" means a life saved. It means a father going home to his children instead of a tragedy. Here, the predictive system is a hero.

But what happens when those same tools are used to "predict" which employees are likely to quit, leading to those individuals being passed over for promotions or alienated before they’ve even made a decision? The tool hasn't just predicted the future; it has forced it.

The Human at the Center

We are currently standing in the middle of a massive, silent transition. We are moving from a world governed by "What happened?" to one governed by "What will happen?"

This shift requires a new kind of literacy. It's not enough to know how to use the tools; we have to know when to doubt them. We have to be willing to ask the uncomfortable questions:

- Where did this data come from?

- Who benefits if this prediction is right?

- Who suffers if it’s wrong?

The invisible architect is already at work. It is shaping the cities we live in, the food we eat, and the way we interact with our own health. It is a powerful, shimmering mirror of our collective behavior.

Sarah closed her laptop. The office was dark now, save for the streetlights reflecting off the wet pavement outside. She had followed the machine’s advice on the base layers, but she’d doubled down on those team-colored scarves. She felt a strange, quiet confidence.

The machine provided the map. But she was still the one driving.

The future isn't something that happens to us. It is a series of probabilities that we collapse into reality through our own agency. We are not just data points in someone else’s model. We are the glitch in the system, the unexpected choice, and the only ones capable of looking at a 99% certainty and choosing the 1% anyway.

The light in the office flickered off. Outside, the world remained stubbornly, beautifully unpredictable.