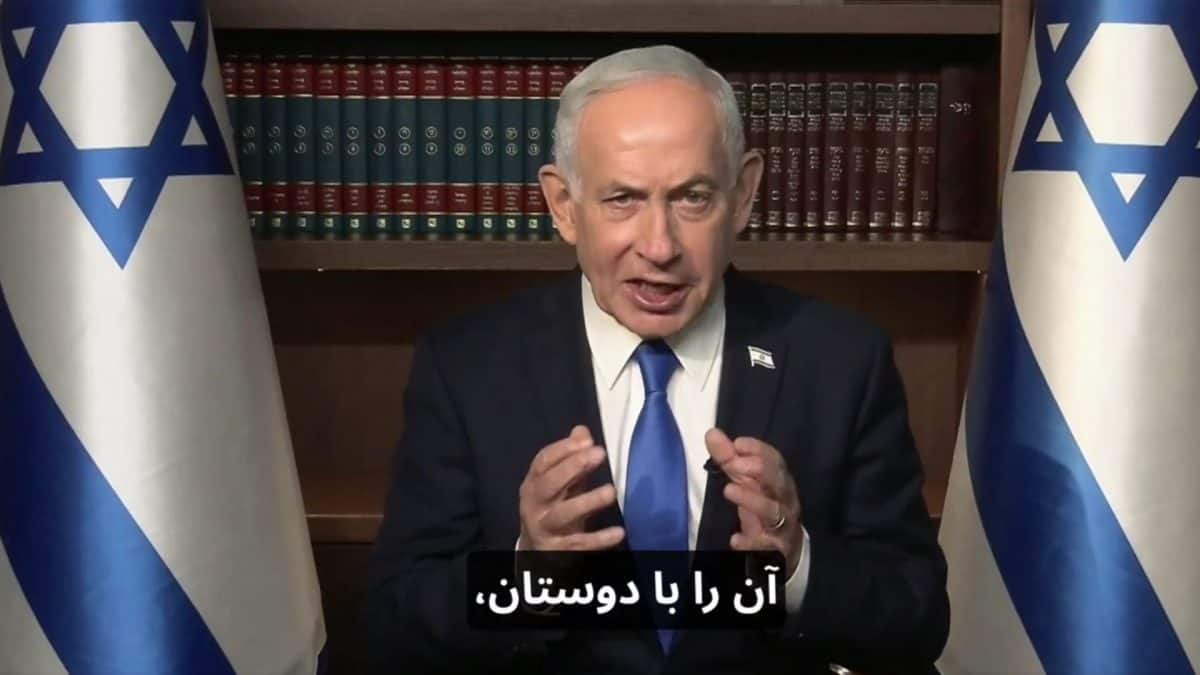

Rumors about a leader's health, combined with high-fidelity synthetic media, create a strategic vacuum in which traditional statecraft struggles to maintain narrative control. When Israeli Prime Minister Benjamin Netanyahu released a video address to the Iranian people during the Festival of Lights, the objective was not merely cultural outreach but a calculated display of physical and political presence. However, the subsequent "fake" or "AI-generated" allegations from online cohorts demonstrate a fundamental shift in the cost of credibility. In the current information environment, the burden of proof has migrated from the claimant to the subject, creating a "Liar’s Dividend" in which any authentic communication can be dismissed as a deepfake to serve a specific geopolitical agenda.

The Triad of Digital Authenticity Verification

The skepticism surrounding the Prime Minister's video is not a localized incident but a symptom of the erosion of the "digital gold standard" for video evidence. To evaluate why a state-sanctioned video can be successfully branded as fraudulent by decentralized actors, we must analyze the three vectors of perception:

- Technical Artifacting: This involves the presence of visual "glitches" or unnatural movements—such as inconsistent blinking or lip-sync misalignment—that suggest generative AI. Even if these are actually results of standard compression algorithms or low-bitrate streaming, they are now weaponized as proof of fabrication.

- Contextual Divergence: This is the gap between the expected physical condition of a subject (e.g., rumors of hospitalization or death) and the presented visual data. If the delta between the rumor and the video is too wide, the audience defaults to a "seeing is not believing" heuristic.

- Algorithmic Amplification: The speed at which "fake" allegations propagate is accelerated by bot networks and ideologically aligned clusters. Once the "AI" label is attached to a video, the technical reality of the footage becomes secondary to the social momentum of the claim.

The Mechanism of the Liar’s Dividend

The "Liar’s Dividend" is a structural vulnerability in modern discourse where the mere existence of deepfake technology makes it easier for people to deny the reality of genuine events. In the case of Netanyahu’s address, the rumor mill regarding his health created a high-incentive environment for his detractors to categorize the video as a digital ghost.

This creates a tactical bottleneck for state actors. If a leader is truly incapacitated, a deepfake could theoretically bridge the gap. Because this possibility exists, every legitimate appearance is now subjected to forensic scrutiny by untrained observers. This decentralized "forensics" often relies on pareidolia—the tendency to see patterns (like skin texture smoothing) where none exist, or where they are simply the result of professional studio lighting and post-production touch-ups.

Information Asymmetry and State Response Functions

The Israeli government’s strategy of direct-to-camera addresses is designed to bypass traditional media filters and speak directly to an adversary's population. This is a form of cognitive maneuver warfare. Framing the address around Hanukkah, the "Festival of Lights," was intended to draw a parallel between the Jewish struggle for liberation and the internal dissent within Iran.

However, the efficacy of this maneuver is negated when the medium itself becomes the target of the attack. When netizens label a video "fake," they are not necessarily making a technical claim; they are performing a political act of de-legitimization. The state’s response function is typically to ignore the claims, as responding to "fake" allegations can inadvertently validate the rumor by giving it oxygen. This creates a paradox:

- Action: Release video to prove vitality and presence.

- Reaction: Opponents claim the video is AI-generated.

- Result: The ambiguity persists, and the original goal of "proving vitality" is only partially met, as the skeptical cohort remains unconvinced.

The Infrastructure of Modern Rumor Propagation

To understand why "death rumors" gain such traction despite video evidence, one must look at the supply chain of misinformation. It follows a predictable trajectory:

- The Information Gap: A period of public absence by a leader (due to surgery, strategic retreat, or routine scheduling) creates a vacuum.

- The Seed: A single unverified post on a platform like X or Telegram claims a "high-level source" reports a health crisis.

- The Corroboration Loop: Other accounts cite the first post as evidence. "People are talking about X" becomes the new headline, shifting the focus from the fact to the conversation about the fact.

- The Visual Counter-Strike: The state releases a video.

- The Denial Phase: The video is analyzed for "tells." Common targets include the ears (which AI historically struggles with), the reflection in the pupils, and the cadence of speech.
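
The corroboration loop in this trajectory can be made concrete with a small sketch. Assuming each post records which earlier post it cites (the post IDs and the `cites` mapping below are hypothetical, not drawn from any real dataset), tracing every post back to its origin shows how few independent sources may sit behind a large volume of chatter.

```python
from collections import Counter

def find_origin(post_id, cites):
    """Follow citation links until reaching a post that cites nothing."""
    seen = set()
    while post_id in cites and cites[post_id] is not None:
        if post_id in seen:  # guard against circular citations
            break
        seen.add(post_id)
        post_id = cites[post_id]
    return post_id

def independent_sources(cites):
    """Count how many posts trace back to each original post."""
    return Counter(find_origin(p, cites) for p in cites)

# Hypothetical data: post -> the post it cites (None = original claim)
cites = {
    "seed": None,
    "a": "seed",
    "b": "a",
    "c": "a",
    "d": "c",
    "e": None,  # an unrelated original post
}

origins = independent_sources(cites)
print(len(cites), "posts,", len(origins), "independent origins")
print(origins)
```

In this toy example, five of the six posts collapse into a single unverified seed. The apparent breadth of "people are talking about X" says little about how many sources actually exist.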

In the Netanyahu case, the technical quality of the video, which was likely shot in a high-end studio with professional lighting, actually worked against it. High production values can mimic the "uncanny valley" effect associated with high-end generative models. A lower-quality, "raw" cell phone video often carries more "authenticity capital" in the current era than a polished address.

Quantifying the Impact of AI Skepticism on Geopolitics

The strategic cost of this skepticism shows up as the erosion of a state's "deterrence by communication." If a leader cannot prove they are alive and in control via video, the traditional methods of projecting power during a crisis are compromised.

We can categorize the levels of digital proof required in the 2020s:

- Tier 1: Controlled Broadcast: Professional studio, high-bitrate. (Highest vulnerability to "AI" claims).

- Tier 2: Live Stream with Interaction: Reading current headlines or responding to live prompts. (High proof value, but difficult to execute for high-security leaders).

- Tier 3: Multi-Angle Third-Party Verification: Leader appearing in footage captured by independent journalists. (Strongest proof, but relies on third-party access).

The Netanyahu video falls into Tier 1. In a high-friction environment like the Israel-Iran shadow war, Tier 1 communication is increasingly insufficient to quell sophisticated misinformation campaigns.
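
Tier 2 is essentially a challenge-response check: the footage demonstrates freshness by including something that could not have been known before a certain moment. Below is a minimal sketch of that idea, assuming a hypothetical verifier that issues a random challenge and accepts it only within a short window. The function names and the 300-second window are illustrative assumptions, not an established protocol.

```python
import secrets
import time

CHALLENGE_TTL_SECONDS = 300  # assumed acceptance window

def issue_challenge(now=None):
    """Create an unpredictable challenge and record when it was issued."""
    now = time.time() if now is None else now
    return {"nonce": secrets.token_hex(8), "issued_at": now}

def verify_response(challenge, spoken_nonce, now=None):
    """Accept only if the nonce matches and the response is timely."""
    now = time.time() if now is None else now
    fresh = 0 <= now - challenge["issued_at"] <= CHALLENGE_TTL_SECONDS
    matches = secrets.compare_digest(challenge["nonce"], spoken_nonce)
    return fresh and matches

# Fixed timestamps are used here so the example is deterministic.
challenge = issue_challenge(now=1000.0)
in_time = verify_response(challenge, challenge["nonce"], now=1100.0)
too_late = verify_response(challenge, challenge["nonce"], now=2000.0)
wrong = verify_response(challenge, "not-the-nonce", now=1100.0)
print(in_time, too_late, wrong)
```

A check like this only shows that a response was produced after the challenge was issued. It rules out pre-recorded material but says nothing on its own about whether the face and voice are genuine, which is why Tier 3 still ranks higher.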

The Role of Metadata and Digital Signatures

A missed opportunity in this specific outreach was the lack of verifiable metadata or "Content Credentials" (C2PA). As we move deeper into the decade, state-level communications will likely require a cryptographic layer to be considered authentic.

Digital watermarking and blockchain-based timestamps can support a claim of origin, but the strongest guarantees come from cryptographic signatures. If the Israeli Prime Minister’s office had released the video with an embedded C2PA manifest, technical analysts could have checked who signed the file, what capture and editing history the signer asserted, and whether the content had been altered since signing. A manifest does not by itself prove that footage is not synthetic; it establishes who vouches for it and makes tampering detectable. The absence of these tools leaves the narrative to be decided by the loudest voices in the comments section rather than by verifiable data.
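
The core idea behind a signed manifest can be sketched briefly. This is not the C2PA format or any C2PA library API. It is a simplified illustration using only Python's standard library, with an HMAC standing in for the public-key signatures that real content-credential systems use. The key, the field names, the publisher string, and the sample bytes are all hypothetical.

```python
import hashlib
import hmac
import json

def make_manifest(video_bytes, publisher, captured_at, key):
    """Bind a content hash and claims together under a keyed signature."""
    claims = {
        "sha256": hashlib.sha256(video_bytes).hexdigest(),
        "publisher": publisher,
        "captured_at": captured_at,
    }
    payload = json.dumps(claims, sort_keys=True).encode("utf-8")
    signature = hmac.new(key, payload, hashlib.sha256).hexdigest()
    return {"claims": claims, "signature": signature}

def verify_manifest(video_bytes, manifest, key):
    """Check the signature, then check the file matches the signed hash."""
    payload = json.dumps(manifest["claims"], sort_keys=True).encode("utf-8")
    expected = hmac.new(key, payload, hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, manifest["signature"]):
        return False
    actual = hashlib.sha256(video_bytes).hexdigest()
    return hmac.compare_digest(actual, manifest["claims"]["sha256"])

key = b"demo-key-not-for-production"  # hypothetical secret
video = b"placeholder bytes for a video file"
manifest = make_manifest(video, "Example Press Office", "2024-01-01T12:00:00Z", key)

unmodified_ok = verify_manifest(video, manifest, key)
altered_ok = verify_manifest(video + b"edit", manifest, key)
print(unmodified_ok, altered_ok)
```

Because HMAC uses a shared secret, only a party holding the key can verify in this sketch. A real deployment would use an asymmetric signature so that anyone can verify with a public key while only the publisher can sign.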

Psychological Operations and the Iranian Audience

The intended audience—the Iranian public—is already conditioned to be skeptical of state-run media, both their own and their adversaries'. This double-layered skepticism creates a fertile ground for "fake" allegations. If an Iranian citizen is told by their government that an Israeli video is a Mossad-produced deepfake, they are likely to believe it, not because they trust their government, but because the concept of "fake news" has become a universal shield against uncomfortable information.

The video’s timing, centered on a religious/cultural crossover, was an attempt to leverage soft power. But soft power requires a hard foundation of truth. When the medium of the message is successfully attacked, the message itself—wishing Iranians a festival of lights and advocating for a future free of the current regime—is discarded as part of the "simulation."

Strategic Recommendation for State Communication in the Generative Era

The pivot from polished studio addresses to "verifiable presence" is now a security imperative. To neutralize the Liar’s Dividend and the threat of AI-denialism, state actors must adopt a multi-modal verification strategy.

The most effective method to counter "death rumors" is no longer the scripted video, but the "unscripted intersection." This involves the leader appearing in a context they do not control—such as a brief walk-and-talk in a public space or a meeting with a foreign dignitary who provides their own documentation of the event. The goal is to maximize "environmental noise"—unpredictable background elements that are computationally expensive or impossible to perfectly replicate in a deepfake.

Furthermore, cryptographic proof of origin must become standard in digital releases. Until state communications are signed with the same level of security as financial transactions, they will remain vulnerable to the "fake" label. The era of believing what we see on a screen is over; the era of believing what we can mathematically verify has begun. The failure of the Netanyahu video to silence rumors is a case study in the obsolescence of the traditional televised address as a tool of crisis management.

State entities must now treat every public appearance as a data-integrity challenge. This requires a shift in personnel from traditional PR specialists to information security and forensic media experts who understand how to "harden" a video against the inevitable claims of synthesis. The battle is no longer for the hearts and minds of the audience through the message, but for the optic nerves of the audience through the verification of the medium.

To regain the narrative edge, the next phase of communication must prioritize raw, multi-source, and cryptographically signed data over high-production-value messaging. This move toward "radical transparency" in the technical sense is the only viable defense against the decentralization of misinformation.