The federal government wants to "vet" your code before the world sees it.

Reporters covering the White House's recent maneuvers on AI model weights and pre-release audits treat them like a boring safety inspection, the equivalent of checking the brakes on a new Ford. They frame it as a prudent, "better safe than sorry" approach to existential risk.

They are wrong.

Vetting isn't about safety. It’s about permission. By shifting from a post-market liability framework to a pre-market licensing regime, the U.S. is inadvertently building the very digital iron curtain it claims to oppose. If you think a committee of career bureaucrats can outpace the red-teaming capabilities of a million global open-source developers, you haven't been paying attention to the last thirty years of software history.

The Myth of the "Controlled" Release

The core fallacy driving the White House's logic is the belief that an AI model is a physical weapon. It isn't. It is math. It is a file.

When the government talks about "vetting," it is talking about mandated delays for "dual-use" foundation models. In the industry, we call this the "Safety Theater Tax." I have watched teams burn $500,000 a month in compute credits while waiting for legal clearances that add zero technical value.

Here is the truth: a model that is "safe" in a lab is never safe in the wild. The moment you release a weights file, the global community finds the jailbreaks. Attempting to "vet" a model to 100% certainty before release is like trying to proofread the internet before letting people use Google. It is a logistical impossibility that only serves to stall domestic innovation while our adversaries, who don't care about ethics committees, run full tilt toward the scaling ceiling.

Regulatory Capture in a Trench Coat

Who actually benefits when the government demands a rigorous, expensive, multi-month vetting process?

Not the consumer. Not the startup in a garage.

The winners are the three or four "hyperscalers" who already have the lobbying budget to navigate the Department of Commerce’s maze. If you make it illegal or prohibitively expensive to release a model without a federal stamp of approval, you aren't protecting the public from Skynet; you are protecting the incumbents from competition.

This is classic regulatory capture. The big players want the regulation. They want the moat. They want a world where a small team with a breakthrough architecture can't disrupt the market because they can't afford the $10 million compliance audit required to hit "publish."

Open Source is the Only Real Defense

The "lazy consensus" argues that open-sourcing powerful models is dangerous because it gives "bad actors" a blueprint.

Let’s dismantle that.

If the U.S. restricts open-source AI, the bad actors don't stop. They just use the leaked versions of our models or build their own. Meanwhile, the "good guys"—the researchers, the independent security auditors, the medical tech startups—are left with nothing but crippled, "vetted" APIs that refuse to answer half their queries because of over-active safety filters.

Security through obscurity is a failed philosophy. Cryptographers learned this long ago, and it is now known as Kerckhoffs's principle: a system should stay secure even when everything about it except the key is public, and the only way to earn that confidence is to let everyone try to break it. By forcing models into a secret government vetting silo, we lose the "Linus’s Law" effect: given enough eyeballs, all bugs are shallow. If we want AI that won't build a bioweapon, we need ten thousand independent researchers looking at the weights, not ten bureaucrats in a basement in D.C.

The False Choice of the "Existential Risk" Narrative

The White House is haunted by the ghost of "p(doom)."

Risk is real, but the current vetting proposals address the wrong kind. They focus on "frontier risks" that are largely theoretical while ignoring the immediate risks of centralization. Imagine a scenario where the only "vetted" AI models are those that align with the specific political or social biases of the current administration. That isn't a hypothetical; it's a certainty.

When you give a central authority the power to gatekeep intelligence, you are creating a single point of failure. If that gatekeeper is compromised, or simply incompetent, the entire ecosystem rots.

The Geopolitical Suicide Note

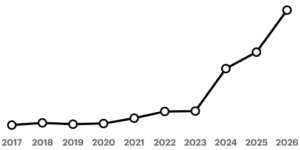

While we debate whether a model should be allowed to tell a joke about a politician, other nations are moving.

China’s approach to AI is explicit: state control for the sake of social stability. By instituting a vetting process, the U.S. is essentially adopting "AI with Chinese Characteristics," just with a different coat of paint.

We are trading our greatest competitive advantage—chaotic, decentralized innovation—for the illusion of safety. You cannot win a race if you stop to ask a referee for permission every time you want to take a step.

Stop Vetting Code and Start Penalizing Harm

The solution isn't to vet the model; it's to hold the user and the developer accountable for the output and application.

We don't vet printing presses. We have libel laws.

We don't vet compilers. We have cybercrime laws.

If a model is used to facilitate a crime, prosecute the criminal. If a company knowingly releases a product that causes tangible, provable harm, sue them into the ground. But the moment we start vetting the "thought process" of a neural network before it's even deployed, we have conceded the future of the internet to the most risk-averse people on the planet.

The White House shouldn't be looking for a way to vet AI. They should be looking for a way to get out of the way before they turn the American AI industry into the American railway industry: stagnant, subsidized, and a century behind the rest of the world.

Move fast and break things? No. Move fast and fix them in the open. That is the only way forward. Anything else is just a slow-motion surrender.